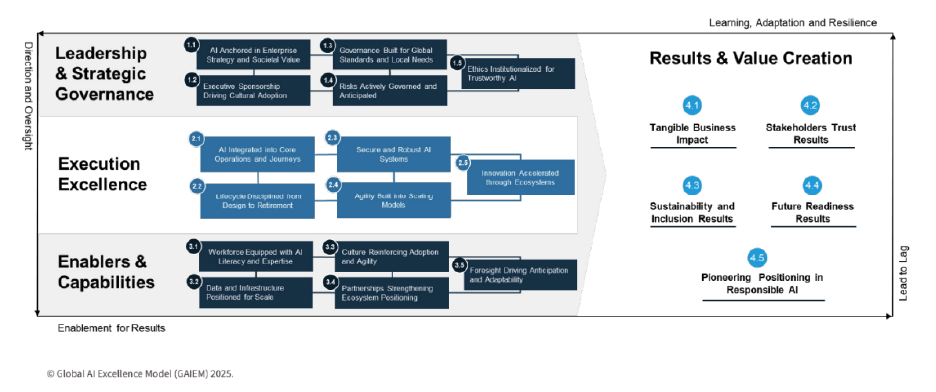

Global AI Excellence Model (GAIEM)

Strategic Lens: Responsible AI governance and system-level capability building

What It Is:

A cross-sector, cross-country AI readiness model co-developed with the Global Centre for AI Excellence (GCAIE) in the United Kingdom and aligned with global standards, including the EU AI Act, the NIST AI Risk Management Framework (AI RMF), and ISO/IEC 42001.

My Role & Impact:

- Advisory Board Member, contributing to readiness standards, sector frameworks, and evaluation criteria.

- Supporting future AI upskilling strategies for the Dubai Government and government entities in Jordan.

Why It Matters:

Helps governments and organisations move from isolated AI pilots to scalable, value-creating AI transformation.

Methods & Evidence:

A four-pillar structure (Leadership, Execution, Enablers, Value Creation), benchmarked against global AI governance models.